Human-in-the-loop data validation

We moderate, evaluate, and validate AI training data and content so that your ML models are trained on high-quality data.

The challenge

Data validation is essential not only for ensuring the quality of AI training data but also for the performance of any AI application.

It is arguably the most important step in machine learning. Without proper validation, poor-quality data can make its way into your model and corrupt the entire training process. For that reason, exhaustive quality assessment (QA) checks of AI data are crucial, not only to ensure your model’s accuracy but also to ensure its relevance, appropriateness, and proper optimization.

The solution

TrainAI from RWS provides complete, end-to-end automated and human-in-the-loop data validation services to ensure your AI model is trained on accurate data you can depend on. We carefully select the right validation experts to meet the unique training needs of your AI model, so you can rest assured that the data we deliver to train your ML model is of the highest quality and represents the cultural, linguistic, and geographic nuances of your AI project.

TrainAI provides comprehensive human-in-the-loop data validation services for today’s large language models (LLMs), generative AI, augmented intelligence, deep learning models, and more.

TrainAI human-in-the-loop data validation services

Annotated data validation

TrainAI data specialists can validate any annotated dataset. This service is especially useful for validating crowdsourced annotations, pre-annotations from an existing model, or, more generally, any dataset whose labels have low confidence scores.

Search evaluation

TrainAI’s search evaluators help improve search engine performance by:

- Assessing the accuracy and relevance of search results based on specific queries or user intent, considering factors such as query interpretation, semantic understanding, and contextual relevance

- Identifying spam, irrelevant or low-quality content, and content that violates guidelines or misleads users

- Evaluating the performance of search engine features and functionalities such as autocomplete, query suggestions, related searches, and knowledge panels

This feedback is then used to train search engines to more effectively deliver relevant, accurate, and useful information to users.

Ad evaluation

Our ad evaluators assess the relevance, quality, and compliance of online ads for advertising AI models by:

- Evaluating the relevance of online ads to the search query or user intent, ensuring that ads match user expectations and the surrounding content

- Reviewing ads to ensure they adhere to ad policies, legal guidelines, and ethical standards, and flagging potential violations and inappropriate content

- Providing feedback and ratings on ad quality, user experience, and compliance

This feedback helps advertising AI models improve ad recommendations and placement, enhancing the effectiveness and user-friendliness of online advertising.

Content evaluation

We audit various types of content, reporting on criteria such as quality, usability, findability, readability, and accessibility, to help you better understand your content and how it is used by your customers.

Content moderation

With human-in-the-loop processes, we validate and categorize AI training data for content moderation models. We boost the accuracy of your AI content moderation solutions by validating the decisions made by your moderation engine.

Geospatial data evaluation

Using our data validation processes and tools, and our community of AI data specialists, TrainAI can help improve the accuracy and quality of geospatial data.

Types of AI data delivered by TrainAI

Text data

Audio / speech data

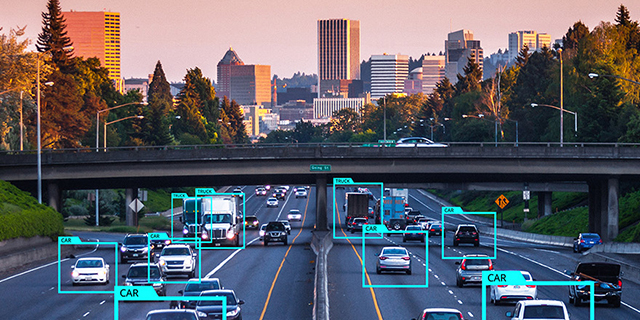

Image data

Video data

Locale-specific data

Synthetic data

Our TrainAI community

Instead of crowdsourcing your data needs to anyone and hoping for the best, we deliver AI training data collected, annotated, and validated by our TrainAI community of active, vetted, skilled, and qualified AI data specialists based on your specific ML project requirements.

Let's connect

Connect with our TrainAI team to discuss your AI training data needs or submit a TrainAI community support request.

