Key benefits

- Accelerated time-to-market with scalable synthetic “golden” data

- Ensured multilingual AI accuracy across complex grammar systems

- Reduced misclassification through prompt engineering and human validation

- Enabled iterative refinement as requirements evolved

- Built a reusable framework for future AI training initiatives

Building an intelligent storytelling engine

When a leading consumer technology brand wanted to launch a competitive feature that allowed users to ask their phone to create video montages of their personal photos, they knew the AI behind it had to be intelligent.

Truly intelligent.

Users would ask things like “Show me my best moments from the fair last summer, but no pictures of clowns,” or “I want pictures of my dog playing fetch.” The model had to understand intent, parse requests accurately, and work flawlessly across dozens of languages with wildly different grammatical rules.

The challenge wasn't just the scale of the project; it was the sophistication. The brand needed training data that captured real human language in all its messy, beautiful imperfection.

That meant the synthetic training data had to include natural conversational patterns, slang, pronouns whose meaning changes with context, and named entities alongside generic keywords that could use the very same words. The data also had to be generated across the declensions of Polish and Russian, the subtle contextuality of French, and the nuances of several other languages.

Traditional approaches wouldn't cut it, so TrainAI built something better.

Our approach to synthetic data generation

Building a truly intelligent AI-powered storytelling feature sounds straightforward until you dig into what it actually requires. Users would interact naturally with the system, tossing out quick requests, winding through detailed memories, and mixing specific locations with vague references.

For example, “Create a montage of my pictures from my trip to New York” seems simple enough. However, the system must recognize that the user is referring to *their* specific trip to New York, which occurred at a specific time.

Similarly, “Pics from the mountain” or “Photos with my parents” could be mistaken for generic references when the user actually means specific ones.

The model also had to learn these distinctions across 20 different languages. That meant creating about 5,000 training examples per language, or 100,000 structured data objects total, each with specific keys and values designed to teach the AI exactly how to parse user intent.

Not hypothetical intent; real user intent, in all its variations.

The team had to construct something that didn't exist yet. We're talking about building a synthetic data framework that would capture the statistical properties of how real people ask for things, while also including the edge cases that trip up most AI systems. This was a foundational piece of training infrastructure for a product millions of users would interact with.

Challenges

- Distinguishing named entities from generic keywords across languages

- Capturing linguistic variation from very short to complex queries

- Managing evolving and undefined client requirements

- Ensuring consistent labeling across multilingual teams

- Balancing automated generation with human oversight

- Covering edge cases that commonly cause AI failure

Solutions

- TrainAI by RWS

- Human-in-the-Loop Data Validation

- Data Annotation and Data Labelling

- Built a standardized multilingual structured data framework

- Refined annotation rules and taxonomy in collaboration with the client

- Created configurable data splits (short/long queries, slang, entity types)

- Implemented iterative human validation feedback loops

- Established multi-layered quality review across all 20 languages
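As a rough illustration of what a configurable data split could look like, the sketch below divides the roughly 5,000 examples per language across split categories. The category names and percentages are hypothetical placeholders; the case study does not publish the real configuration.

```python
# Hypothetical split shares in whole percentages (they must sum to 100).
# These numbers are illustrative only and do not come from the project.
SPLIT_PERCENTAGES = {
    "short_queries": 40,
    "long_queries": 30,
    "slang": 20,
    "entity_focused": 10,
}


def allocate_examples(total: int, percentages: dict[str, int]) -> dict[str, int]:
    """Turn percentage shares into per-split example counts that sum to total."""
    if sum(percentages.values()) != 100:
        raise ValueError("split percentages must sum to 100")
    counts = {name: total * pct // 100 for name, pct in percentages.items()}
    # Integer division can leave a small remainder; give it to the first split.
    remainder = total - sum(counts.values())
    first_split = next(iter(counts))
    counts[first_split] += remainder
    return counts


per_language = allocate_examples(5000, SPLIT_PERCENTAGES)
for split_name, count in per_language.items():
    print(f"{split_name}: {count}")
```

Keeping the shares in one dictionary means a change in client requirements only touches the configuration, not the generation code.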

Results

- 100,000 production-ready training objects delivered

- 20-language golden dataset with consistent standards

- 15% → 70% → 99% English pass rate after validation

- Parallelized pipeline producing thousands of outputs per hour

- Reusable framework ready for future AI projects

Evolution of the data generation process

The team centered everything on a clear data structure, using “keys,” such as:

- “Named locations”

- “Keywords”

- “Times”

- “Named entities”

These “keys” are types of variables that can contain values. They formed the backbone of how the LLM would interpret user requests across 20 languages.

A “named entity” needed to be specific and personal to the user (“my dog” or “my parents’ house”), while generic references (“a mountain” or “a dog”) belonged in keywords.
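A minimal sketch of one such structured data object follows, assuming a simple Python dataclass. The class name, field names and example query are hypothetical; only the four keys and the entity/keyword distinction come from the description above.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ParsedQuery:
    """Hypothetical structured data object; fields mirror the four keys."""
    query: str
    language: str
    named_locations: list[str] = field(default_factory=list)
    keywords: list[str] = field(default_factory=list)
    times: list[str] = field(default_factory=list)
    named_entities: list[str] = field(default_factory=list)


# Illustrative labeling: "my dog" is personal to the user, so it is a
# named entity; "a mountain" is generic, so it goes into keywords.
example = ParsedQuery(
    query="Pictures of my dog next to a mountain last summer",
    language="en",
    keywords=["mountain"],
    times=["last summer"],
    named_entities=["my dog"],
)

print(json.dumps(asdict(example), indent=2))
```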

As the project unfolded, guidelines that seemed straightforward at first became more complex, revealing more edge cases than the client had anticipated. The team adapted quickly, refining rules and recalibrating data as they went.

To reduce the time and cost of the project, the team developed a highly parallelized synthetic data generation pipeline, allowing them to launch many LLM requests at once instead of one by one.

By handling these calls concurrently, they could collect outputs, parse and reshape them in code and inject extra logic or randomness from Python. They could do all this before moving to the next reinforcement or evaluation step, without leaving any gaps in the workflow.

This concurrency is especially useful with slower reasoning models, because it hides latency behind parallel work and keeps overall project turnaround fast for clients.
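The idea of launching many requests at once can be sketched with Python's `asyncio`. This is not the team's actual pipeline: `fake_llm_call`, `generate_batch` and `max_concurrency` are hypothetical names, and the model call is simulated with a short sleep rather than a real API.

```python
import asyncio
import random


async def fake_llm_call(prompt: str) -> str:
    """Stand-in for a real LLM request (hypothetical; no API is called)."""
    await asyncio.sleep(random.uniform(0.01, 0.05))  # simulated latency
    return f"output for: {prompt}"


async def generate_batch(prompts: list[str], max_concurrency: int = 8) -> list[str]:
    """Run all prompts concurrently, with at most max_concurrency in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded_call(prompt: str) -> str:
        async with semaphore:
            return await fake_llm_call(prompt)

    # gather returns results in the same order as the input prompts.
    return await asyncio.gather(*(bounded_call(p) for p in prompts))


prompts = [f"query {i}" for i in range(20)]
outputs = asyncio.run(generate_batch(prompts))
print(f"collected {len(outputs)} outputs")
```

Because the results come back as an ordinary Python list, they can be parsed, reshaped or randomized in code before the next step starts. The semaphore caps how many requests are in flight, which is one common way to stay within rate limits.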

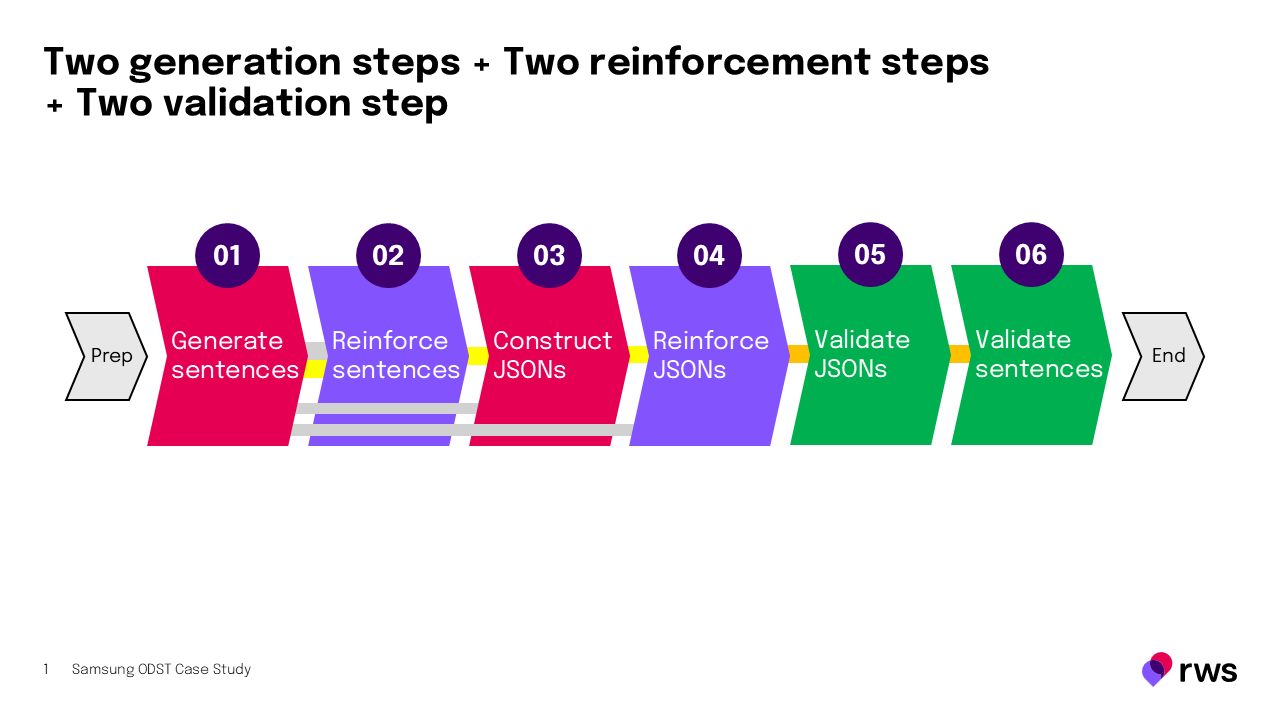

What began with one generation step and one “reinforcement” step eventually expanded into six steps total: two generation steps, two reinforcement steps and two validation steps. It also included the use of two different generations of one generative AI model.

As the pipeline expanded, the pass rate for English rose from about 15% to about 70%.

Human validators reviewed every output, excluded errors and hallucinations, and turned raw synthetic data into training signals that reflected how people actually speak.

After human verification, annotation and delivery, the pass rate for English reached about 99%!

| Language | Fail ratio | Quality score | Fail ratio after arbitration | Quality score after arbitration |
|---|---|---|---|---|
| Korean | 0.84% | 99.16% | 0.44% | 99.56% |
| Vietnamese | 4.52% | 95.48% | 1.92% | 98.08% |
| English | 0.44% | 99.56% | 0.16% | 99.84% |
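In every row of the table, the quality score equals 100% minus the fail ratio. That relationship is inferred from the published figures rather than stated in the source, and the short check below only confirms it. The function and variable names are illustrative.

```python
from decimal import Decimal

# (fail ratio, fail ratio after arbitration) in percent, copied from the table.
FAIL_RATIOS = {
    "Korean": (Decimal("0.84"), Decimal("0.44")),
    "Vietnamese": (Decimal("4.52"), Decimal("1.92")),
    "English": (Decimal("0.44"), Decimal("0.16")),
}


def quality_score(fail_ratio: Decimal) -> Decimal:
    """Assumed relationship: quality score = 100% - fail ratio."""
    return Decimal("100") - fail_ratio


for language, (before, after) in FAIL_RATIOS.items():
    print(f"{language}: {quality_score(before)}% -> {quality_score(after)}%")
```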

By the end of the project, the team had captured linguistic variation from short, colloquial queries to long, detailed requests, and had delivered human-validated synthetic data spanning multiple query patterns and edge cases.

Most importantly, they had built a complex but highly scalable, parallelized synthetic data generation pipeline capable of producing thousands of valid structured data outputs in a single hour.

Key insights from this work:

- Human verification matters: Humans must review generated synthetic data before it is used, so that errors and LLM hallucinations don’t make it into AI training data.

- Edge cases define quality: Synthetic data is only as good as its coverage of exceptions and variations.

- Flexibility drives results: Requirements evolved because the problem was genuinely complex; the team's ability to adapt enabled real progress.

“We are very pleased to see clear, concise and realistic queries. Most of the database looks good. If Korean, English and Vietnamese reviews are carried out with this level of care, we believe the same high quality can be achieved across other languages as well.”

Scaling synthetic data generation for the future

This work created a reusable blueprint. Synthetic data generation at this scale and sophistication is a capability, not simply a one-off project. The framework can be adapted for other AI projects, which can include other languages and domains.

Furthermore, this project demonstrated the value of smaller, more specialized models. They can be trained directly on “golden” synthetic data batches that have already been fully validated by native speakers, turning high-quality structured data objects into precise training signals.

Future projects could use a network of small, fine-tuned models for each step. This could reduce overgeneration, cut waiting time, and lower costs while maintaining high pass rates for downstream human annotators.

That’s one of the advantages of working with TrainAI by RWS: We don't just deliver synthetic data; we deliver innovative, repeatable frameworks for generating it in the future.