Building machine translation (MT) systems for low-resource languages is challenging, especially with neural MT (NMT) methods, which require a comparatively large corpus of parallel data. In this post, we review the work of Zoph et al. (2016) on training NMT systems for low-resource languages using transfer learning.

Transfer Learning

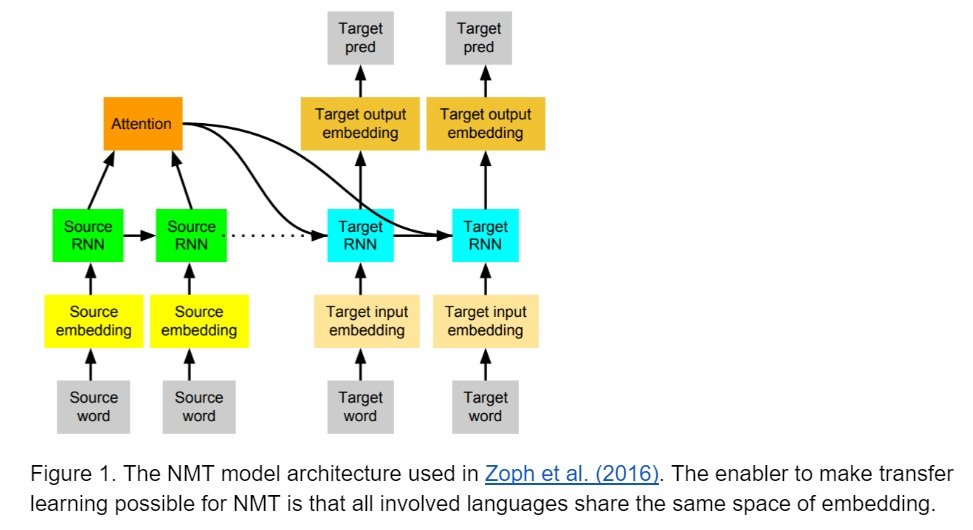

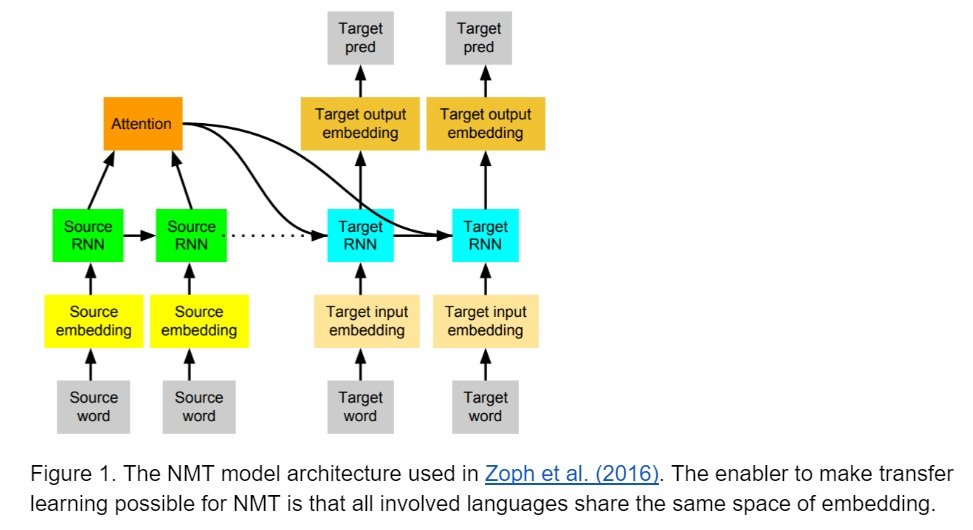

The idea of transfer learning is to take advantage of an already trained model to improve performance on a related task. Knowledge is transferred from a pre-trained model to a new model, and the two models are not necessarily built for the same machine learning task. In most applications, however, the two models do handle the same task, and the main goal is to reduce the amount of training data needed to train a well-performing model.

In NMT, transfer learning means training a model (the child) on a small parallel corpus, e.g. Uyghur-to-English, using the parameters of a pre-trained model (the parent), e.g. Turkish-to-English, as the initial parameters. The idea is that the knowledge captured in the Turkish-to-English model can be transferred to the newly trained Uyghur-to-English model. The main benefit of this strategy is that the new model can need much less training data than the pre-trained model, and it can be trained relatively quickly. Note that there must be some connection between the two models. In NMT, the connection is that all the languages involved, i.e. Uyghur, Turkish and English, share the same embedding space.
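To make the parent/child initialization concrete, here is a minimal sketch in plain Python. It is not an NMT system and not the setup used by Zoph et al. (2016): a two-parameter linear model stands in for the translation model, and the names `parent_params`, `child_data`, `fine_tune` and `mse` are all hypothetical, chosen only for illustration. It shows the one mechanism described above: the child starts training from the parent's parameters instead of from scratch, and is then updated on a small dataset from a related task.

```python
import copy


def mse(params, data):
    """Mean squared error of the linear model y = w*x + b on (x, y) pairs."""
    return sum((params["w"] * x + params["b"] - y) ** 2 for x, y in data) / len(data)


def fine_tune(init_params, data, steps=3, lr=0.1):
    """Run a few steps of gradient descent, starting from init_params."""
    params = copy.deepcopy(init_params)  # leave the starting parameters untouched
    n = len(data)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b
        grad_w = sum(2 * (params["w"] * x + params["b"] - y) * x for x, y in data) / n
        grad_b = sum(2 * (params["w"] * x + params["b"] - y) for x, y in data) / n
        params["w"] -= lr * grad_w
        params["b"] -= lr * grad_b
    return params


# Assumption: these stand in for a "parent" model already trained on a large, related task.
parent_params = {"w": 2.0, "b": 1.0}

# Assumption: a tiny "child" dataset from a related task (generated from y = 2.2*x + 0.9).
child_data = [(0.0, 0.9), (1.0, 3.1), (2.0, 5.3)]

# Child initialized from the parent vs. child initialized from zeros,
# both trained for the same small number of steps on the same small dataset.
child_from_parent = fine_tune(parent_params, child_data)
child_from_scratch = fine_tune({"w": 0.0, "b": 0.0}, child_data)

print("loss with parent initialization:", mse(child_from_parent, child_data))
print("loss with zero initialization:  ", mse(child_from_scratch, child_data))
```

In this toy setting the parent-initialized child ends with a lower loss after the same few updates, because it starts close to a good solution. In a real NMT system the same pattern would apply to the full set of network weights, with the details (which parameters to copy, which to keep fixed) depending on the model.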