Standards are an important part of most industries, but that’s an egregious understatement. Without standards, industries couldn’t reliably produce, procure, trade or even have a market to sell to. A better assertion would be that without standards, industries wouldn't be healthy, sustainable entities. And they might not exist at all.

Or, as ISO puts it on its home page, standards “make things work.”

Like plumbing and electricity, we tend to take standards for granted. We depend on them, but we hardly notice or think about them until they’re absent. In this regard, I’ve come to think of standards as a form of invisible architecture.

I’ve found that this view holds true in the language industry as well. As a localization professional, I’ve relied on standards to deliver solutions for a quarter century, but it wasn’t until I became more involved in localization and globalization standards work that I recognized how important they are to the industry’s well-being.

Taking a closer look at language industry standards can be worthwhile even if you’re not directly involved with their creation or implementation. Understanding not only how they work but also why they were created can help make sense of a sometimes-mysterious landscape and reveal opportunities for improvement.

Imagine a world without standards

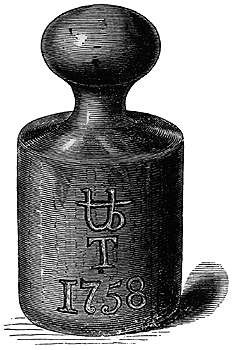

The “Troy Pound,” shown above, a unit of mass that originated in 15th-century England, is still used in the precious metals industry.

David Filip is a language industry veteran and champion of open standards. He once told me, “You can’t imagine what it would be like to live in a world without standards.”

It’s an interesting “what if” game to play. To start, there would presumably be no currency, so trade would be limited to barter, and barter would be limited to things one could make by hand. Even getting to a trading center would be an uncertain proposition. Would the road be wide enough for your cart when there are no width standards?

There’s a great early episode of the Radiolab podcast that describes life in the American Midwest in the 1800s, before standardized timekeeping. Before the railroads arrived, the clocks in banks, saloons and shops, as well as people’s watches, were set by the local rising and setting of the sun.

As these towns became connected by rail, the absence of a time standard became a problem. How do you plan routes and connections when one train’s “late” is another train’s “early”? The problem ultimately required the adoption of a time standard: “rail time.” Today, the world’s clocks (the trains’ included) are set relative to Coordinated Universal Time.

We’ve had our own pre-standardization chaos in the language industry, too. I remember translating text before Unicode was widely adopted, when every language and platform had its own encodings. A paragraph of Czech text had to be encoded in Windows-1250 to be read on a Windows machine, but in “Code Page 10029” (Mac Central European) to be read on a Mac. Back then, we were constantly transcoding content, and character corruption was common.

The problem was made worse by the fact that a lot of translation was done in Microsoft Word using RTF, an evolving format that made encoding decisions based on the name of the font used when typing the text. Since fonts themselves could have different encoding variants, and people often did not have the same fonts installed, text that looked perfect in one copy of Microsoft Word could appear corrupted in another. Yikes!

Fortunately, XLIFF replaced RTF as the bi-text format. That we hardly ever run into character corruption today is thanks to the process standardization made possible by Unicode and XLIFF.
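
To make the transcoding problem concrete, here is a small Python sketch (an illustration, not a reconstruction of any actual workflow from that era). It encodes a sample Czech sentence in Windows-1250 and with Python’s `mac_latin2` codec, which implements the Mac Central European mapping (code page 10029), then shows what happens when one set of bytes is read with the other code page, and how a single Unicode encoding such as UTF-8 sidesteps the issue. The sample text and variable names are illustrative.

```python
# Czech pangram used purely as sample text.
TEXT = "Příliš žluťoučký kůň"

# The same text stored in the two legacy 8-bit code pages.
windows_bytes = TEXT.encode("cp1250")    # Windows-1250 (Central European)
mac_bytes = TEXT.encode("mac_latin2")    # Mac Central European (code page 10029)

# Reading Windows bytes as if they were Mac bytes produces mojibake, not an error.
# errors="replace" guards against any byte the Mac table might leave undefined.
corrupted = windows_bytes.decode("mac_latin2", errors="replace")

# Legacy workflow: transcode by decoding with the *correct* code page,
# then re-encoding for the target platform.
transcoded = mac_bytes.decode("mac_latin2").encode("cp1250")

# Unicode workflow: one encoding (UTF-8 here) for every platform.
utf8_bytes = TEXT.encode("utf-8")

if __name__ == "__main__":
    print("Windows-1250:", windows_bytes.hex(" "))
    print("Mac CE:      ", mac_bytes.hex(" "))
    print("Misread:     ", corrupted)
    print("UTF-8 round trip OK:", utf8_bytes.decode("utf-8") == TEXT)
```

The transcoding step only works if you know which code page the bytes were written in; nothing in the bytes themselves says so, which is exactly why corruption was so frequent.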

Digging into language industry standards

Unicode and XLIFF are famous (as far as language industry standards can be famous). You may also be familiar with TMX (Translation Memory eXchange), TBX (TermBase eXchange) and SRX (Segmentation Rules eXchange), developed by the now-defunct Localization Industry Standards Association (LISA) for the exchange of translation memory content, terminology assets and segmentation rules, respectively.

Here are a few that you might not be familiar with.

XLIFF 2

XLIFF 2 is a version of XLIFF, but it is technically its own standard because it isn’t backward compatible with its predecessor. It is the result of years of real-world use of XLIFF and addresses some of the earlier version’s limitations. It provides for greater extensibility through modules, including the Metadata Module, a valuable capability in the context of content enrichment.

I call out XLIFF 2 explicitly because I believe that, having done a good job supporting the earlier XLIFF, we as an industry are under the misapprehension that we’re already “standardized.” XLIFF 1 is certainly better than no XLIFF, but XLIFF 2 is better than XLIFF 1. There’s more work to be done.
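
For readers who haven’t seen XLIFF 2 up close, the sketch below uses Python’s standard `xml.etree.ElementTree` to build a minimal XLIFF 2.0 document with one translation unit carrying Metadata Module annotations. The two namespace URIs are the ones defined by the OASIS XLIFF 2.0 specification; the function name, the `enrichment` category and the metadata keys are hypothetical, and the output is not validated against the schema.

```python
import xml.etree.ElementTree as ET

# Namespaces from the XLIFF 2.0 core and its Metadata Module.
XLIFF_NS = "urn:oasis:names:tc:xliff:document:2.0"
MDA_NS = "urn:oasis:names:tc:xliff:metadata:2.0"

ET.register_namespace("", XLIFF_NS)   # core elements use the default namespace
ET.register_namespace("mda", MDA_NS)  # module elements use the mda: prefix


def q(ns, tag):
    """Build an ElementTree qualified name, e.g. {uri}unit."""
    return f"{{{ns}}}{tag}"


def build_xliff2(src_lang, trg_lang, unit_id, source, target, meta):
    """Hypothetical helper: one file, one unit, one segment, plus metadata."""
    root = ET.Element(q(XLIFF_NS, "xliff"),
                      {"version": "2.0", "srcLang": src_lang, "trgLang": trg_lang})
    file_el = ET.SubElement(root, q(XLIFF_NS, "file"), {"id": "f1"})
    unit = ET.SubElement(file_el, q(XLIFF_NS, "unit"), {"id": unit_id})

    # Metadata Module: key/value pairs grouped under a category.
    # Module elements are placed before the unit's segments.
    metadata = ET.SubElement(unit, q(MDA_NS, "metadata"))
    group = ET.SubElement(metadata, q(MDA_NS, "metaGroup"), {"category": "enrichment"})
    for key, value in meta.items():
        ET.SubElement(group, q(MDA_NS, "meta"), {"type": key}).text = value

    segment = ET.SubElement(unit, q(XLIFF_NS, "segment"))
    ET.SubElement(segment, q(XLIFF_NS, "source")).text = source
    ET.SubElement(segment, q(XLIFF_NS, "target")).text = target
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    print(build_xliff2("en", "cs", "u1", "Hello", "Ahoj", {"domain": "marketing"}))
```

Because the metadata lives in its own namespace, a tool that doesn’t understand the module can still process the core `<segment>` content, which is the point of modular extensibility.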

TAPICC

I’ve written and spoken about TAPICC (Translation API Cases and Classes) previously, as it is a standards-oriented project that I’m passionate about. TAPICC is an initiative sponsored by GALA (the Globalization and Localization Association) that seeks to standardize the API methods used by various CMS, TMS, LQA tools and other systems that need to exchange information during localization.

RWS Moravians have served in leadership positions in various TAPICC working groups and contributed to best practices (some of which have gone on to be codified in OASIS).

MQM/DQF

The Multidimensional Quality Metrics (MQM) standard and the MQM-harmonized Dynamic Quality Framework (DQF) from TAUS give the industry a shared framework for defining and quantifying language quality using, well, the same language. You can find the stamp of RWS Moravia’s involvement on these initiatives as well.
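
As a rough illustration of how error annotations can be turned into a number, here is a simplified penalty-per-word calculation in Python. It is not the official MQM or DQF scoring model, which has varied between versions; the severity weights (minor 1, major 5, critical 10), the 0-100 scale and the function name are assumptions chosen for the example, and the error categories are just labels.

```python
# Assumed severity weights for this sketch; real programs configure their own.
SEVERITY_WEIGHTS = {"minor": 1, "major": 5, "critical": 10}


def quality_score(errors, word_count, weights=SEVERITY_WEIGHTS):
    """Hypothetical scoring: 100 minus weighted penalty points per 100 words.

    errors: iterable of (category, severity) tuples, e.g. ("Accuracy", "major").
    The category is carried along for reporting but does not affect the score here.
    """
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    penalty = sum(weights[severity] for _category, severity in errors)
    # Clamp at zero so a very poor sample doesn't produce a negative score.
    return max(0.0, 100 - 100 * penalty / word_count)


if __name__ == "__main__":
    sample = [("Accuracy", "major"), ("Fluency", "minor"), ("Terminology", "minor")]
    print(quality_score(sample, word_count=250))
```

The value of a shared framework is less in the arithmetic than in the agreement: two vendors using the same categories, severities and weights can compare results meaningfully.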

Internationalization Tag Set (ITS)

The Internationalization Tag Set (ITS) is a W3C standard for annotating XML and HTML documents with information that supports localization, such as which content should not be translated, and for recording information about the localization process itself.

In our interview with Professor Dave Lewis of the ADAPT Research Centre on the provenance of global content, Dave described some of the work he’s done to support provenance tracking within the tag set.
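
To show what ITS annotations look like in practice, the Python sketch below reads HTML that uses two ITS 2.0 data categories: Translate, expressed through the native HTML5 `translate` attribute, and Localization Note, expressed through the `its-loc-note` attribute. It collects the text a localization tool would send for translation and any notes for translators. It is a simplification under stated assumptions: it handles only local attributes (no global `its:rules`), treats a few void elements specially, and the class name and sample markup are hypothetical.

```python
from html.parser import HTMLParser

# Elements with no closing tag; they never open a translate scope.
VOID_ELEMENTS = {"br", "hr", "img", "input", "link", "meta"}


class ItsTranslateExtractor(HTMLParser):
    """Hypothetical extractor honoring translate="yes/no" and its-loc-note."""

    def __init__(self):
        super().__init__()
        self.scope = [True]      # translatable by default; inherited by children
        self.translatable = []   # text runs to send for translation
        self.notes = []          # localization notes for translators

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if "its-loc-note" in attributes:
            self.notes.append(attributes["its-loc-note"])
        if tag in VOID_ELEMENTS:
            return
        flag = attributes.get("translate")
        if flag == "no":
            self.scope.append(False)
        elif flag == "yes":
            self.scope.append(True)
        else:
            self.scope.append(self.scope[-1])  # inherit from the parent element

    def handle_endtag(self, tag):
        if tag not in VOID_ELEMENTS and len(self.scope) > 1:
            self.scope.pop()

    def handle_data(self, data):
        text = data.strip()
        if text and self.scope[-1]:
            self.translatable.append(text)


if __name__ == "__main__":
    extractor = ItsTranslateExtractor()
    extractor.feed('<p its-loc-note="Product name; keep in English">'
                   'Install <span translate="no">Acme Sync</span> today.<br></p>')
    extractor.close()
    print(extractor.translatable, extractor.notes)
```

Because the annotations travel inside the content itself, any ITS-aware tool in the chain can respect them without a side agreement between vendor and client.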

Why we support standards

RWS Moravia believes in actively supporting and promoting localization-related standardization efforts, especially open standards. Why?

It’s in our best interest to see such initiatives succeed. Standards are designed to eliminate the cost and risk associated with unnecessary variation within a system (read “waste”). Every client dollar we keep from being wasted can go toward another facet of their localization program, making our services inherently more valuable.

Open standards also support interoperability and, in turn, operational freedom. Both are fundamental to providing our clients with best-fit globalization solutions that may combine many different kinds of services and technologies. It’s easier for us to create robust, flexible and innovative solutions when the systems involved use open standards.

Lastly, by being actively involved with standards initiatives, we’re able to influence them: a way of predicting the optimal future by creating it. For us, this kind of active participation is a win-win-win. The entire industry becomes more efficient, our clients get more value for their localization dollar and we’re empowered to build more compelling, and ultimately more valuable, solutions.

Author

Lee Densmer